We take the headache out of digital compliance.

We turn your digital legal obligations into design solutions, so you can focus on growing your business.

Find digital compliance issues

Our AI solutions find dark patterns and other digital legal violations on sites and apps. Avoid fines, class actions and reputational risks.

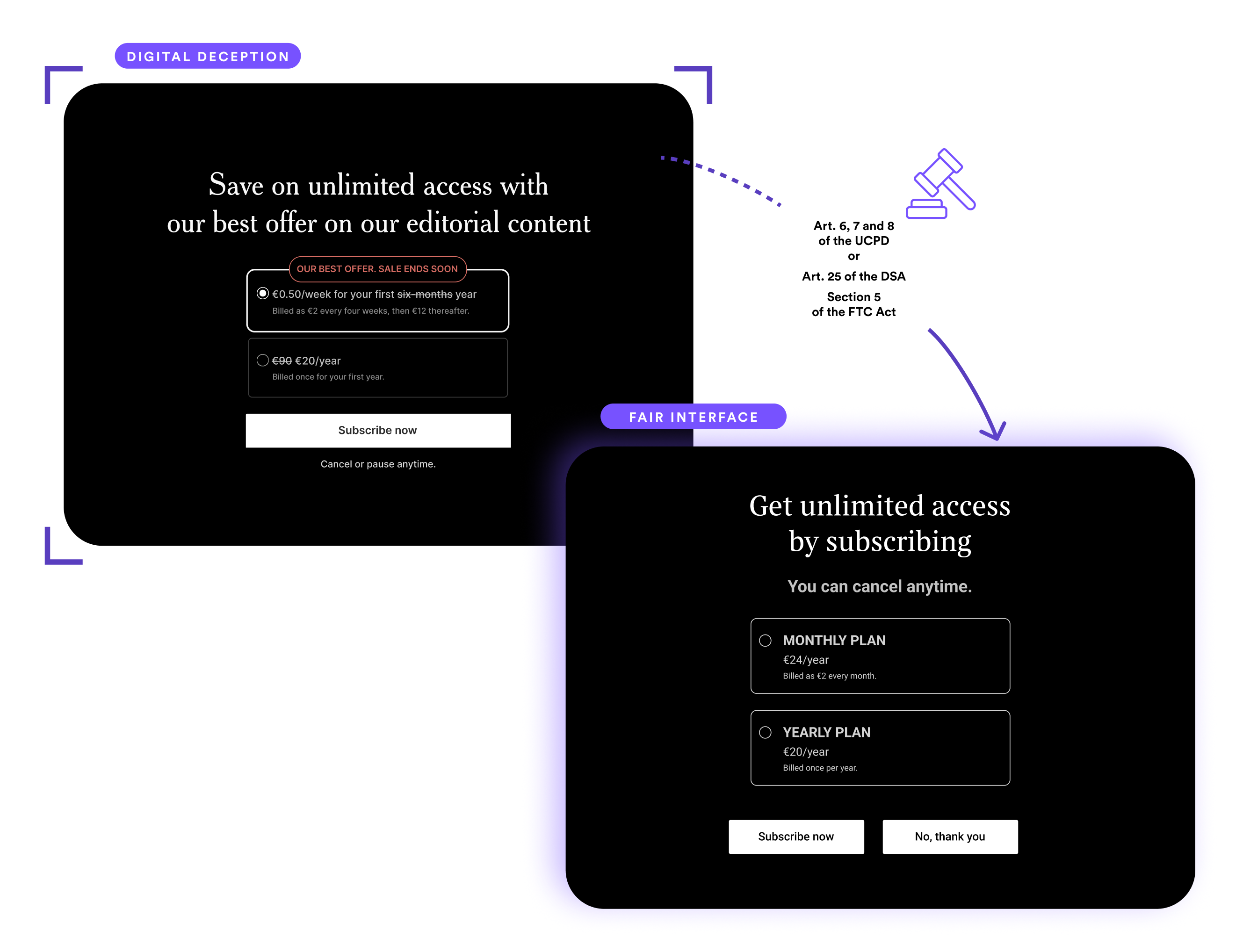

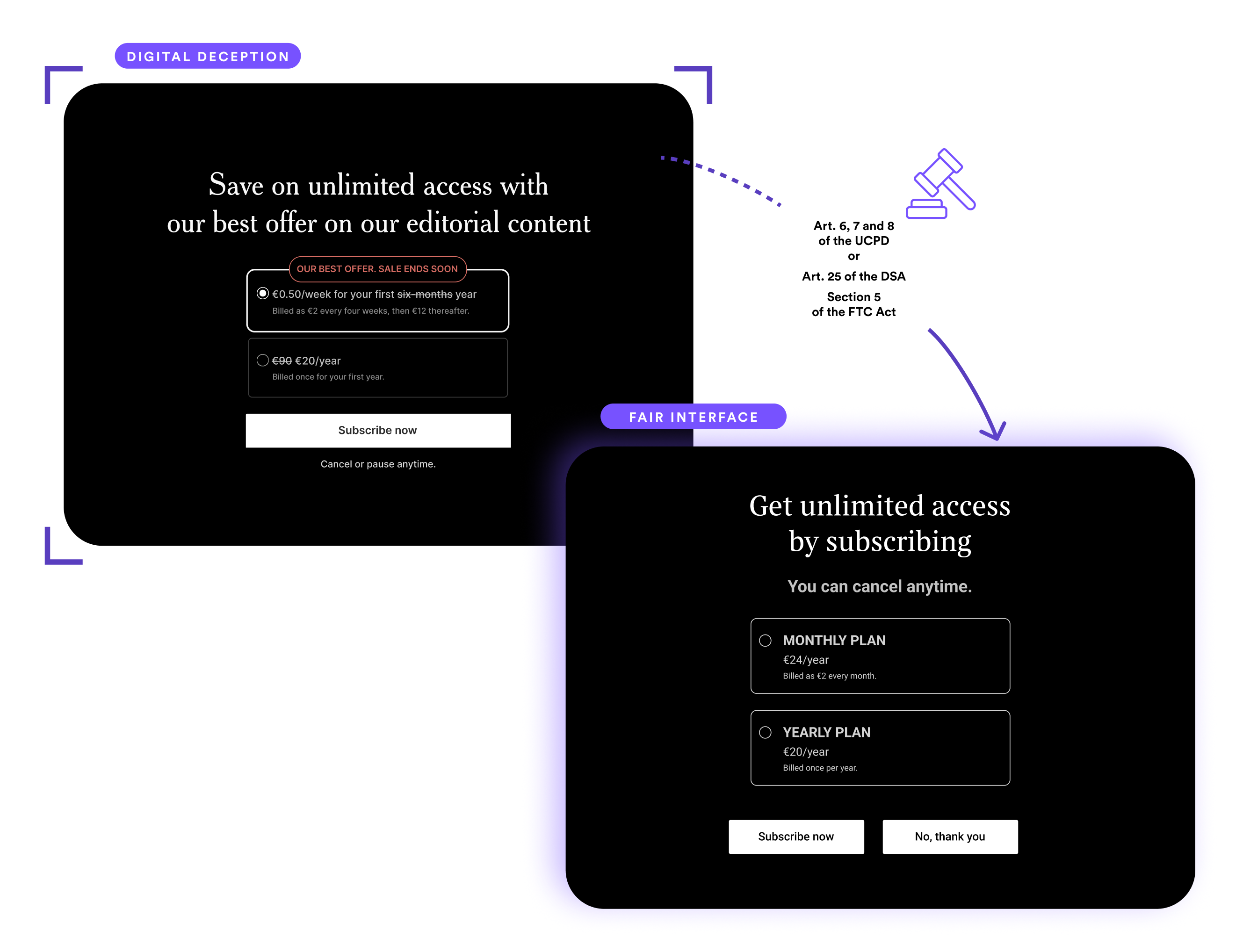

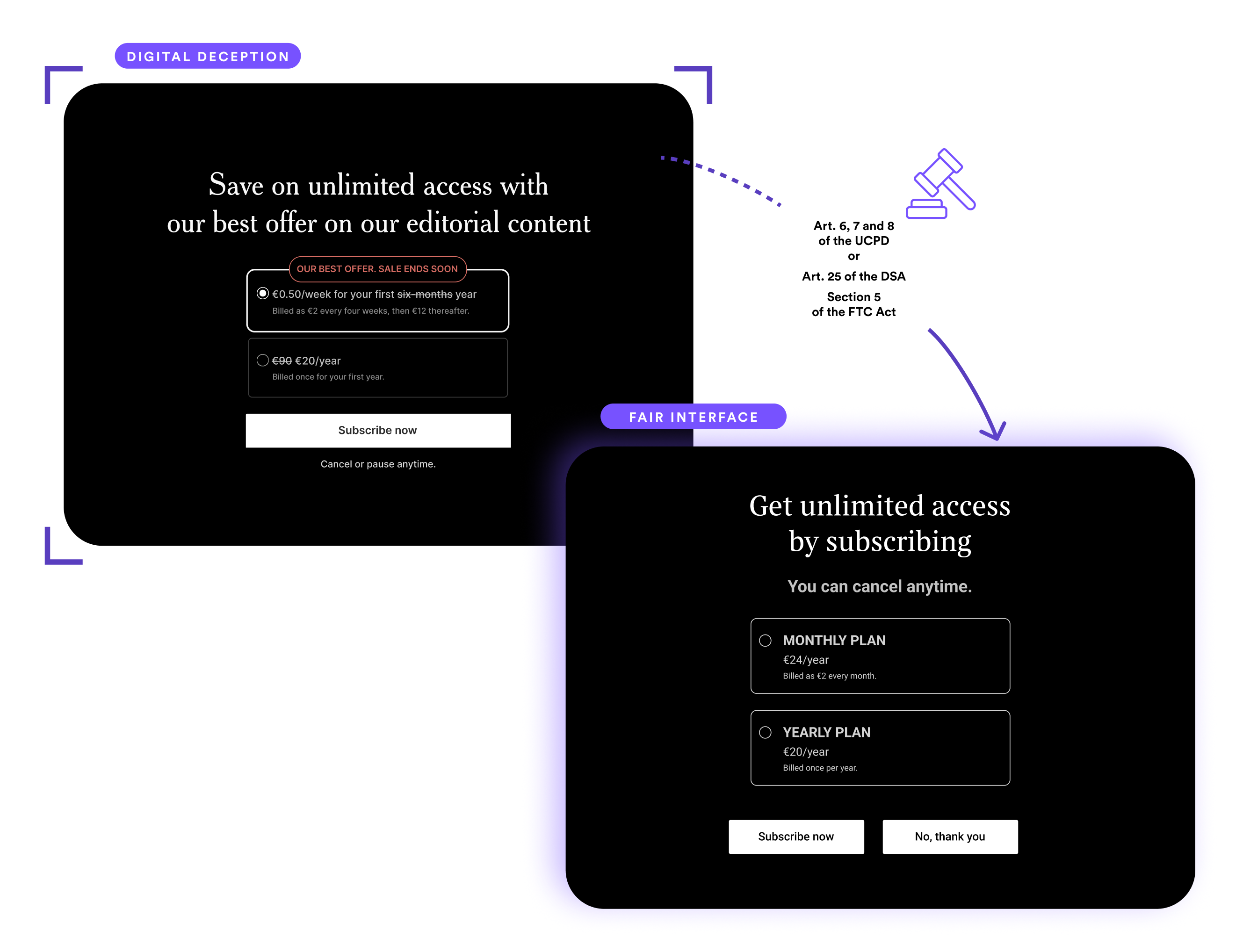

Fix digital violations with design solutions

Our Fair Patterns library translates legal advice into interfaces. Happier users, more sustainable growth.

Create compliance by jurisdiction

Our AI agent empowers your designers and developers to avoid creating new dark patterns at the mockup stage.

Supercharge your enforcement against dark patterns

We help you map, prioritize and carry out enforcement actions using scientific, verifiable evidence.

Strengthen regulatory oversight

Enhance your ability to oversee and regulate digital spaces by identifying dark patterns with precision.

Empower evidence-based actions

Use cutting-edge technology to analyze data, providing robust evidence for enforcement actions.

Advance legislative compliance

Facilitate a proactive regulatory approach that sets a benchmark for digital ethics and consumer protection.

Power up your compliance & litigation on dark patterns

Rigorous insights from psychology, neuroscience and UX design that can transform your cases. We find, test and fix dark patterns.

Supercharge your digital compliance audits with AI

Our AI solution finds dark patterns and other digital violations across any taxonomy. It pre-identifies applicable laws and risks so you can exercise your judgement and easily provide your legal advice.

Enhance your case strategy

Gain a competitive edge in litigation with science-backed insights into user behavior and dark patterns.

Get the silver bullet in litigation cases and before regulators

Our Dark Patterns User Testing Lab provides scientific evidence of manipulation and deception, or the absence thereof. The ultimate evidence that will make a difference in your case.

Solutions

Deceptive Design AI Agent

Ever wanted to prevent dark patterns from ever going live? We've got you! That's why we created our Deceptive Design AI Agent. It empowers designers and developers to avoid creating new dark patterns at the mockup or integration stage.

Dark Pattern Screening

Our AI solution finds dark patterns and other digital violations on sites and apps. It provides risk prioritization and easy-to-implement design solutions.

Figma Plug-in

Our Figma Plug-in enables your designers to scan their designs at the mockup stage and fix any potential digital violations before they go live.

Consent Scanner

Gain instant insights on cookie practices across digital platforms, helping you uphold privacy standards and ensure compliance across websites.

Fair User Lab

A range of qualitative, quantitative and eye-tracking research services to determine whether products use problematic dark patterns.

Identifies text, design and code issues, and understands context

Issue spotting + digital solutions

Legal advice easily embedded into interfaces

AI agent to avoid creating new breaches

Training

Unlock the power of fair design with our comprehensive training programmes.

Scheduled Masterclasses

Join other professionals for group training on one of the following dates

On-Demand Courses

Get in touch to schedule a private training course for your team

Free resources

Publications

Research and articles about dark patterns, user behaviour and law.

Regulations

Laws and regulations from around the world that relate to dark patterns.

Cases

Legal cases and enforcement actions from around the world that relate to dark patterns.

Jobs

Employment opportunities in dark patterns and related areas.

Events

Upcoming events about laws, dark patterns, tech ethics and related areas.

Upgrade your compliance knowledge

Speaking & Media

Marie Potel-Saville, Co-Founder & CEO of FairPatterns, is available for keynote speeches and speaking engagements.

Expertise Areas

• Dark Patterns & Digital Manipulation

• AI Ethics & Trustworthy Design

• Digital Fairness Regulations (GDPR, DSA, Digital Fairness Act)

• Consumer Protection in Digital Ecosystems

• Ethical AI Implementation

Invite Marie to Speak

Bring ethical design to the stage with Marie’s keynote on dark patterns and user trust.

Fair Patterns Podcast and Blog

Exploring the intersection of ethics, design, and compliance in the digital world

A Q&A with Marie Potel-Saville on Dark Patterns and Fair Design

Awards & Associations

Meet Our Team

Our expert auditors, researchers, and legal professionals.